DDPG and TD3 Algorithms

Embodied Intelligence Perspective: DDPG and TD3 are classic algorithms for continuous control tasks such as robotic arm control and dexterous hand manipulation. They use deterministic policies to directly output joint torques, combined with experience replay for off-policy training with relatively high sample efficiency.

DPG Algorithm

In classic policy gradient algorithms, we learn a stochastic policy

In certain cases, using a deterministic policy is more efficient. A deterministic policy directly maps a state to a specific action:

The corresponding policy gradient expression:

This is the core of the Deterministic Policy Gradient (DPG) algorithm.

DDPG Algorithm

Building on DPG, DDPG adds several key components:

OU Noise

The purpose of introducing noise is to enhance exploration. OU (Ornstein-Uhlenbeck) noise is a stochastic process with mean-reverting properties:

In the DDPG algorithm, the final action selection becomes:

Advantages of OU noise over Gaussian noise:

- Exploration: The persistent, autocorrelated nature makes exploration smoother and more stable

- Controllability: The mean-reverting property gradually reduces noise — more exploration early on, more exploitation later

- Stability: Avoids abrupt jittering and maintains action continuity

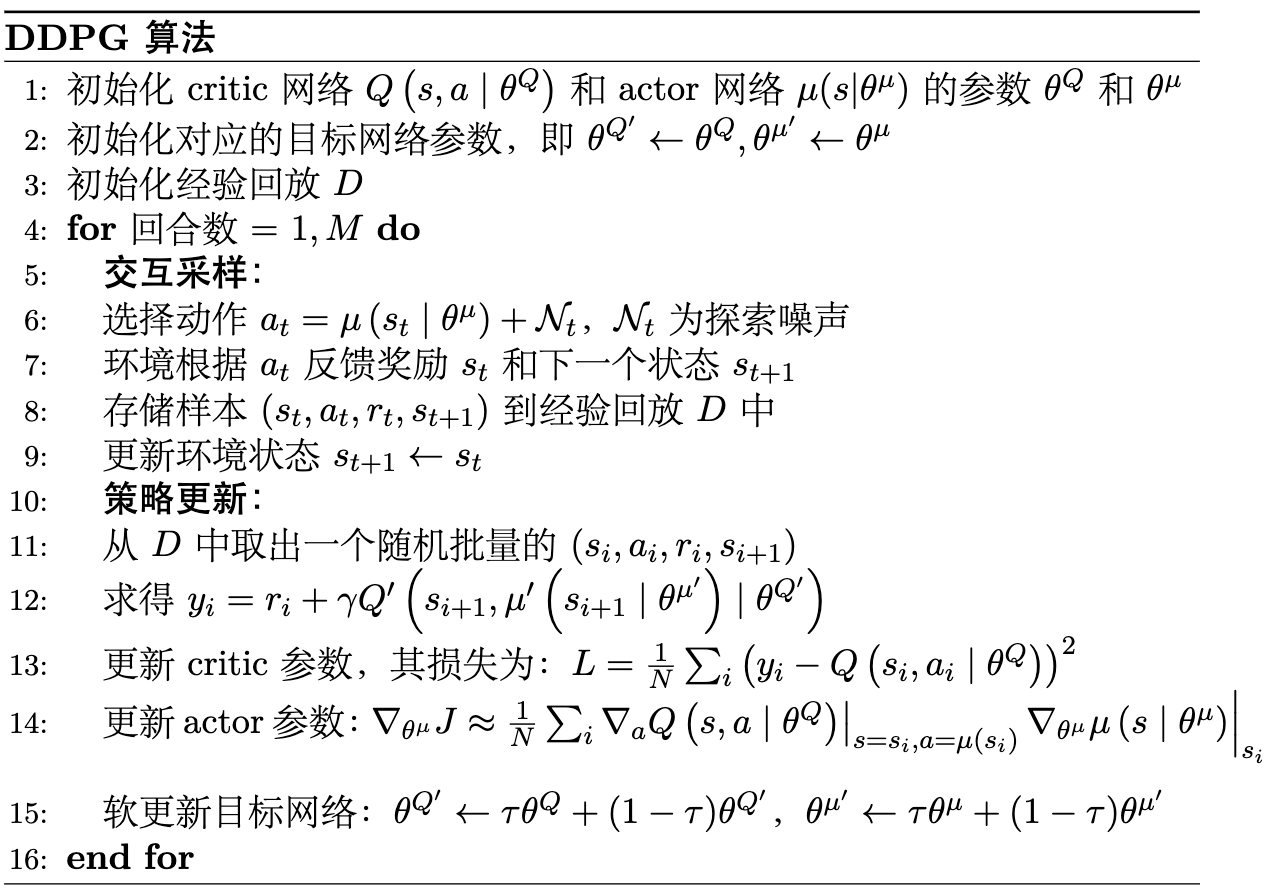

The overall flow of the DDPG algorithm is shown in Figure 1:

In DDPG, the target network uses soft update, allowing target network parameters to change smoothly, improving training stability and convergence.

TD3 Algorithm

DDPG is prone to Q-value overestimation, leading to unstable or even divergent policy learning. TD3 proposes three key improvements.

Twin Q-Networks

Two independent Critic networks are used to estimate action values. When computing target values, the smaller of the two

The corresponding loss function:

Delayed Updates

The Actor network is updated less frequently than the Critic network. Once the Critic learns more accurately, the Actor follows with updates. In practice, the Critic is updated 10 times for every 1 Actor update.

Target Policy Smoothing

Noise is added to the target policy when computing target values, along with clipping:

This noise regularization improves the Critic's robustness and stability.

Discussion

Is DDPG an off-policy algorithm?

Yes. DDPG combines experience replay, target networks, and a deterministic policy, making it a typical off-policy algorithm. This means it can reuse historical data, achieving higher sample efficiency, and is well-suited for use with simulation environments.

Summary of TD3 improvements over DDPG:

- Twin Q-networks → reduce Q-value overestimation

- Delayed updates → improve training stability

- Target policy smoothing → improve Critic robustness