PPO Algorithm

Embodied Intelligence Perspective: PPO is currently the most widely used RL algorithm in embodied intelligence. NVIDIA Isaac Gym uses PPO by default for training quadruped robot locomotion, dexterous hand manipulation, and other tasks; OpenAI used PPO to train a dexterous hand to solve a Rubik's cube. PPO's advantages lie in its simplicity, stability, and ease of hyperparameter tuning, making it the go-to baseline algorithm for embodied intelligence.

Importance Sampling

Before diving into the PPO algorithm, let us first introduce importance sampling. Suppose we need to sample from distribution

The ratio

PPO Core Idea

The core of PPO is using importance sampling to optimize the Actor-Critic policy gradient estimate. The objective function:

where the old policy distribution

Essentially, PPO adds importance sampling constraints on top of Actor-Critic, ensuring that each policy gradient estimate does not deviate too far from the current policy, thereby reducing variance and improving algorithm stability and convergence.

Clip Constraint

To ensure the importance weight does not deviate too far from 1, PPO uses a clip constraint:

where

KL-Penalty Constraint

An alternative approach adds a KL divergence penalty term to the loss:

In practice, the clip constraint is generally preferred because it is simpler, computationally cheaper, and also performs better.

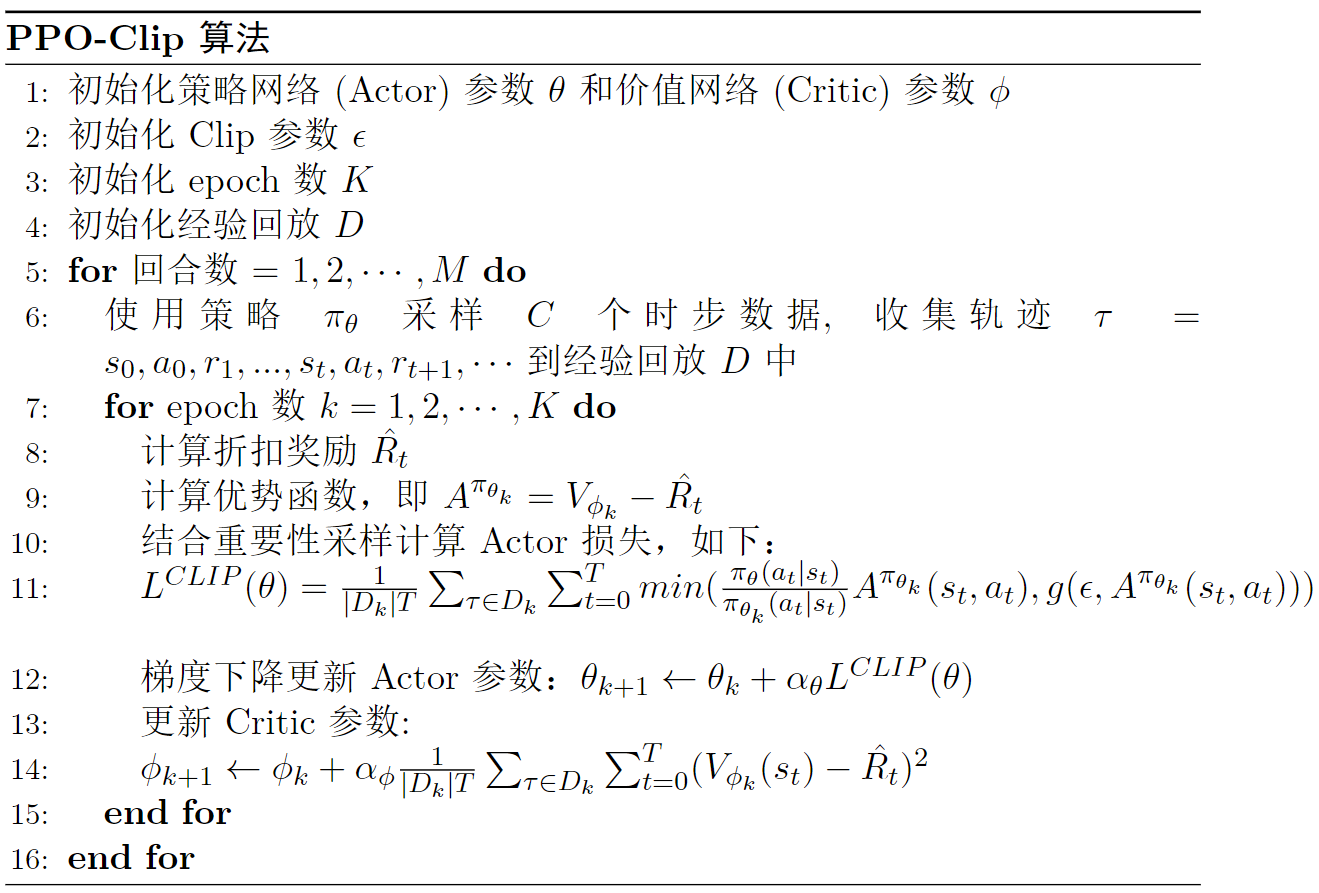

PPO Algorithm Pseudocode

PPO is On-Policy

A common misconception is that PPO is off-policy because it uses samples from the old policy. In reality, PPO uses importance sampling to correct for the data distribution discrepancy, allowing data sampled from the old policy to approximately represent current policy data. Therefore, PPO is on-policy.

This also means PPO's sample efficiency is lower than off-policy methods like SAC/TD3. However, in embodied intelligence, simulation environments (such as Isaac Gym) can perform massively parallel sampling, compensating for this disadvantage.

Discussion

Why is PPO so popular in embodied intelligence?

- Simple and stable: The clip constraint is easy to implement with good training stability

- Highly versatile: Works with both discrete and continuous action spaces

- Parallelization-friendly: The on-policy nature is naturally suited for multi-environment parallel sampling (Isaac Gym can run thousands of environments simultaneously)

- Easy to tune: Few hyperparameters with low sensitivity