SAC Algorithm

Embodied Intelligence Perspective: SAC is widely used in scenarios requiring high sample efficiency, such as robotic arm manipulation and dexterous hand control. Due to its off-policy nature, SAC can reuse historical data, making it particularly advantageous for real robots where sampling is expensive.

Maximum Entropy Reinforcement Learning

Deterministic vs Stochastic Policies

- Deterministic policies: Stable but lack exploration, prone to local optima (e.g., DQN, DDPG)

- Stochastic policies: Flexible but with higher variance (e.g., A2C, PPO)

Maximum entropy RL argues that even with mature stochastic policies, "optimally random" behavior has not been achieved. It introduces information entropy, maximizing the policy's entropy alongside the cumulative reward:

where

The more random the policy, the higher the entropy. The benefit of maximum entropy: when uncertain about which action is optimal, it tends to keep more options open, making the policy more robust.

Soft Q-Learning

Under the maximum entropy framework, the Q-value and V-value functions need to be redefined.

Soft Q-value function (with added entropy term):

Soft V-value function:

The V function is essentially a softmax form of the Q function (rather than hardmax) — this is where the "Soft" name comes from. When

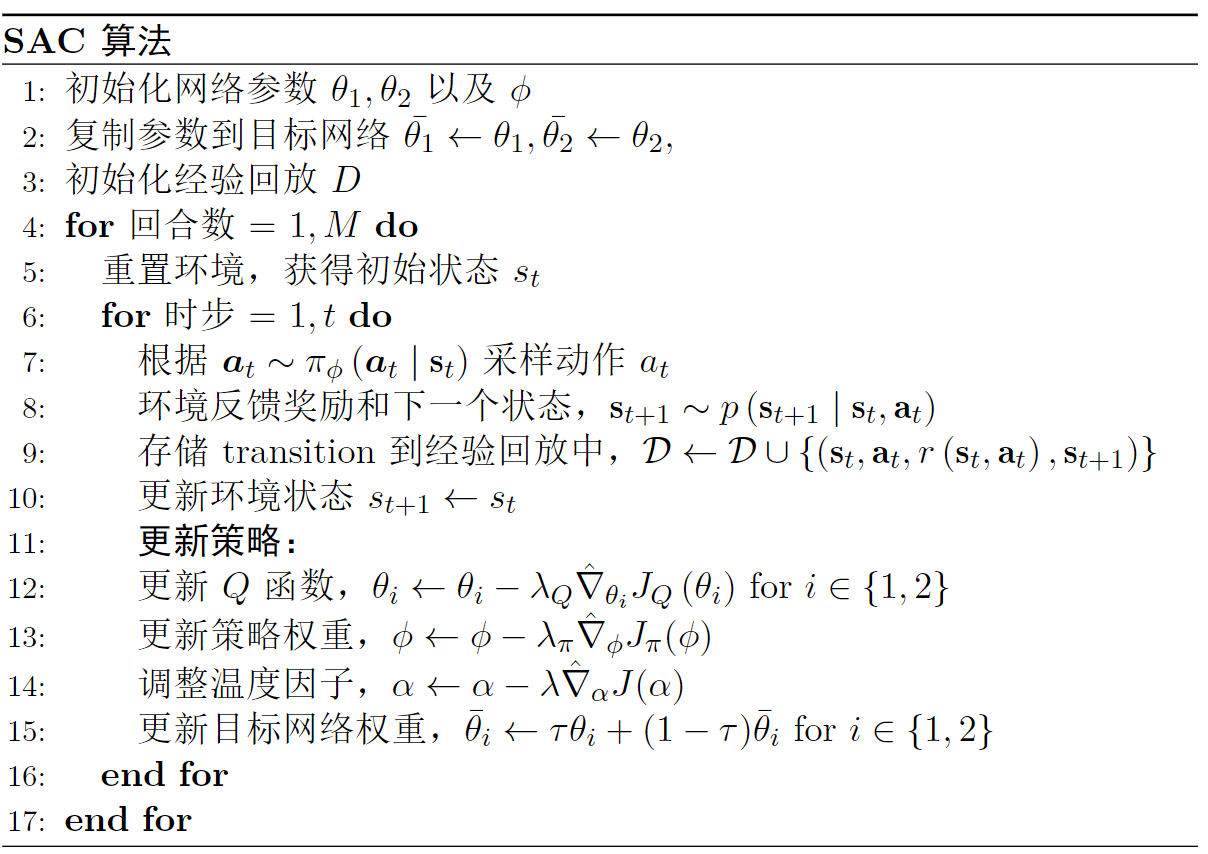

SAC Algorithm

SAC has two versions: v1 from 2018 and v2 from 2019. v2 mainly adds automatic temperature factor adjustment.

SAC v1 (2018)

SAC v1 contains three networks: V network, Q network, and policy network.

V network objective function:

Soft Q function objective:

Policy objective function:

SAC v2 (2019) — Automatic Temperature Tuning

In v1, the temperature factor

Through the Lagrangian multiplier method,

PPO vs SAC Comparison

| Feature | PPO | SAC |

|---|---|---|

| Policy type | on-policy | off-policy |

| Sample efficiency | Lower (requires many parallel environments) | Higher (can reuse historical data) |

| Experience replay | Not used | Used |

| Action space | Discrete + continuous | Primarily continuous |

| Exploration method | Policy stochasticity | Maximum entropy (more systematic) |

| Typical scenario | Large-scale parallel simulation | Real robots, small-batch data |

| Tuning difficulty | Simple | Simple (v2 automatic temperature) |

In embodied intelligence, if large-scale parallel simulation environments like Isaac Gym are available, PPO is preferred; if working with real robots or scenarios where simulation sampling is expensive, SAC is preferred.

Discussion

Why does maximum entropy improve policy robustness?

Maximum entropy encourages the policy to maintain probability across multiple viable actions, rather than concentrating all probability on a single action. This means:

- Even if one action is optimal in the training environment, the policy retains other backup actions

- When the environment changes slightly (sim-to-real gap), the policy still has other actions available

- This "conservative" randomness provides natural resistance to environmental uncertainty